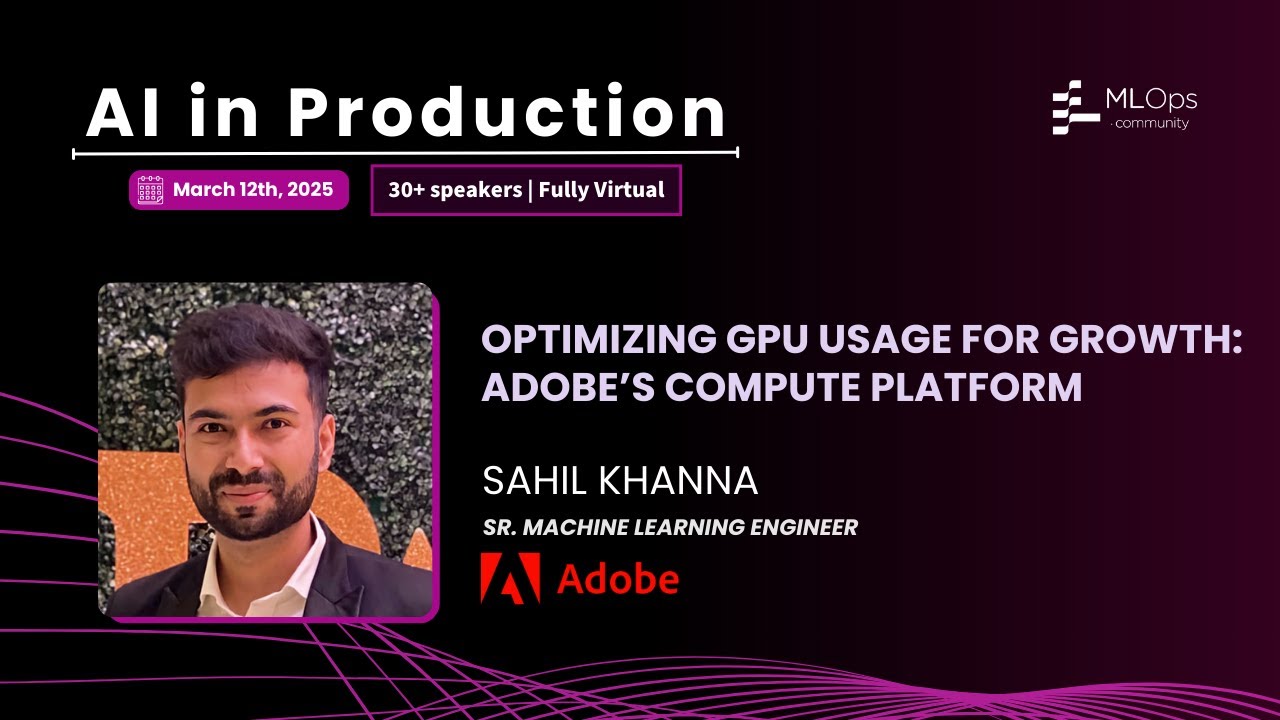

Accelerating Growth Through Optimizing GPU Usage // Sahil Khanna // AI in Production 2025

This talk traces Adobe's journey in building a sophisticated AI Compute Platform to tackle the immense challenges of GPU optimization for training large-scale generative models like Firefly. It covers the team's custom-built solutions for resource management, developer productivity, and automated fault tolerance.